THE GREAT AMERICAN BULLSHIT MACHINE

There is a moment, usually around your third cup o’ joe, when you look down at the glowing rectangle in your hand and realize the thing has been feeding you for twenty minutes without you knowing what you actually ate. You scroll. You swipe. You stop on a meme of a senator whose face has been welded onto the body of a raccoon. You laugh. You almost believe it. Then you catch yourself, and the question hits like a cold wave: how much of what I just saw was real?

I ran a small experiment this week. I opened every app, bookmarked every “real story behind,” screen-grabbed every gotcha meme, every shocking statistic, every outraged take that came thundering down the feed between Monday morning coffee and Friday night bourbon. Then I fact-checked them. The results would not surprise George Carlin, who spent his career pointing at what he called American bullshit and asking why we keep eating it with a spoon. Roughly nine out of ten items were, to use the technical term, pure unrefined nonsense. Prime cut. Grade A. High quality. Fake.

This is not a rant. I am pragmatic. I run a marketing shop. I sell on these platforms. They put food on the table. But the gap between what the feed is doing for us and what the feed is doing to us has become too wide to ignore, and anyone telling you otherwise is probably trying to sell you a course on how to beat the algorithm.

Let me be crystal clear on one thing right up front. I am not calling for a social police force. I do not want a federal bureau of meme inspectors. I do not want a government official reading over my shoulder, deciding what I can post, share, or laugh at. Free usage, free speech, free country. What is, is. The First Amendment still runs the joint, and it should.

The question is not whether we censor the public square. The question is why, in a world where I can filter spam out of my email with one click, I cannot filter out bots, AI slop, and algorithmically juiced rage bait from my own feed. Give the user the off switch. Let me tell Instagram: no AI-generated images, no accounts less than thirty days old, no posts flagged as synthetic. Let me tell Facebook: show me the friends I chose, in the order they posted, and nothing else. That is not censorship. That is a coffee filter. The fact that the platforms fight it tooth and nail tells you exactly whose experience they are optimizing for, and it is not yours.
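For the technically curious, here is a rough sketch of how small that off switch could be. It is an illustration, not any platform's actual code: the Post fields, the flagged_synthetic label, and the filtered_feed function are all hypothetical names invented for this example, and it assumes the platform already knows account age and has some synthetic-content label to filter on.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Post:
    author: str
    posted_at: datetime
    author_account_age_days: int
    flagged_synthetic: bool  # hypothetical label for AI-generated content


def filtered_feed(posts, friends, min_account_age_days=30):
    """Keep only posts from chosen friends, drop flagged or too-new accounts,
    and return what is left in the order it was posted (oldest first)."""
    kept = [
        p for p in posts
        if p.author in friends
        and not p.flagged_synthetic
        and p.author_account_age_days >= min_account_age_days
    ]
    return sorted(kept, key=lambda p: p.posted_at)
```

Three conditions and a sort. The thirty-day threshold is the one from the paragraph above; a real version would let each user set their own.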

The good news is the free market started filling the gap while the platforms looked the other way. Browser extensions like AI Content Shield will block AI-generated posts across X, Instagram, Facebook, Threads, Reddit, Pinterest, and LinkedIn. Kagi’s SlopStop lets users report and mute AI sludge across the web. DuckDuckGo now has a setting at noai.duckduckgo.com that keeps AI-generated images out of your search results. A volunteer-maintained uBlock Origin blocklist catches more than a thousand AI content farms. None of this is a full solution. All of it is more than the platforms themselves offer. Tells you something.

So let us take a cold, rational look, the kind Steven Pinker would take with a pencil behind his ear, and add a measure of the compassion the Dalai Lama keeps reminding us to carry. Then let us admit what we see.

THE NUMBERS, FOR THE PEOPLE WHO STILL BELIEVE IN THEM

Start with the simple stuff. The 2025 Pew Research Center report on Americans and social media found that YouTube and Facebook remain the dominant platforms in the United States, with roughly half of adults visiting each every day. About one in four American adults uses TikTok daily. Among adults sixty-five and older, Facebook is running at fifty-seven percent usage, and YouTube has climbed to sixty-four percent. Grandma is on the feed too. She has questions.

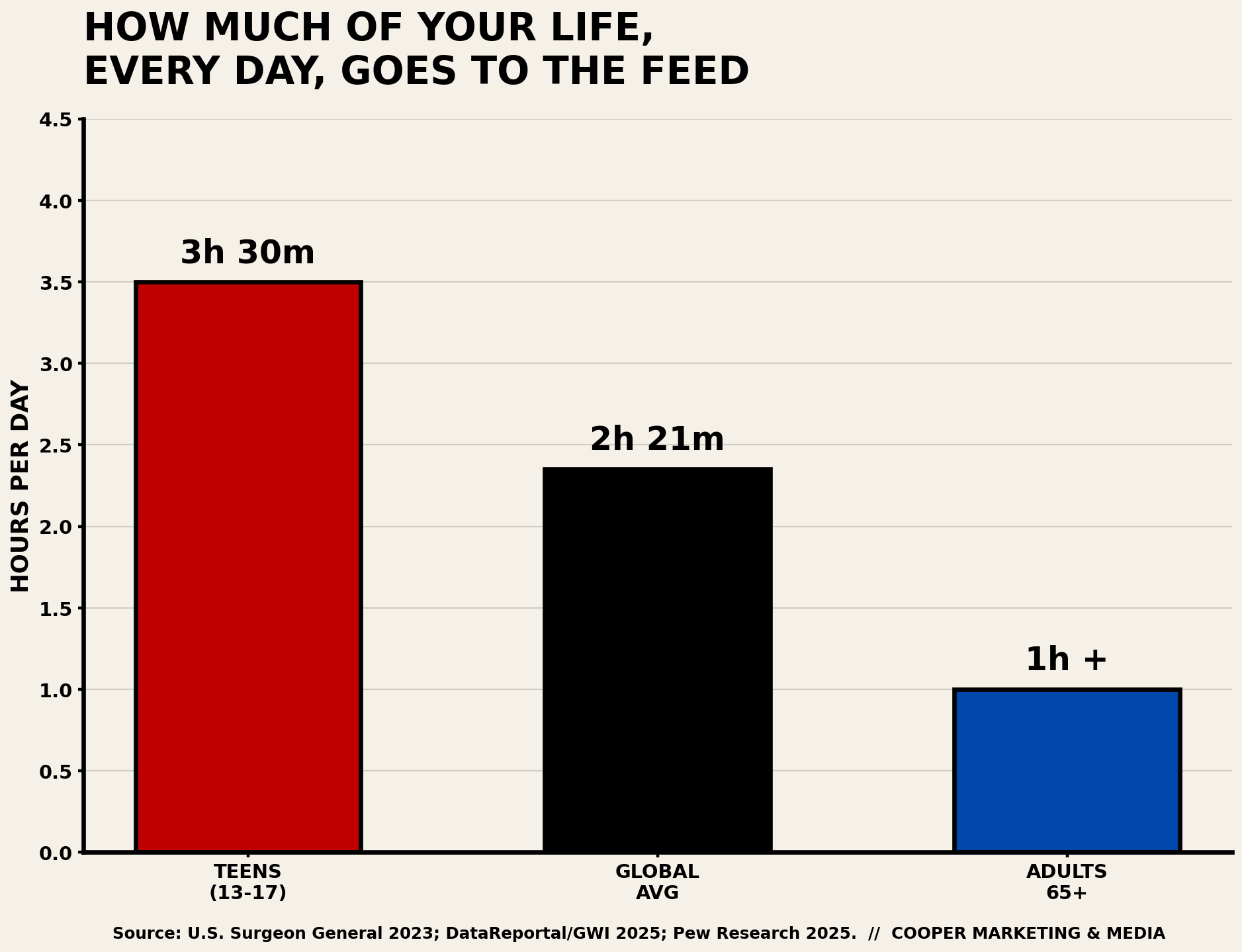

Teens are the ones to worry about. Ninety-five percent of kids between 13 and 17 report using a social media platform, and more than a third say they use it “almost constantly,” according to the 2023 U.S. Surgeon General’s advisory on social media and youth mental health. Teens who spend more than three hours a day on these platforms face roughly double the risk of depression and anxiety symptoms. The average teen spends 3.5 hours a day. Do the math and weep quietly into your napkin.

Globally, DataReportal and GWI reported that the average internet user spent 2 hours and 21 minutes on social media every day in early 2025. That is a hundred and forty-one minutes of your one wild and precious life, handed over to an ad auction, every twenty-four hours, whether or not you remember agreeing to the trade.

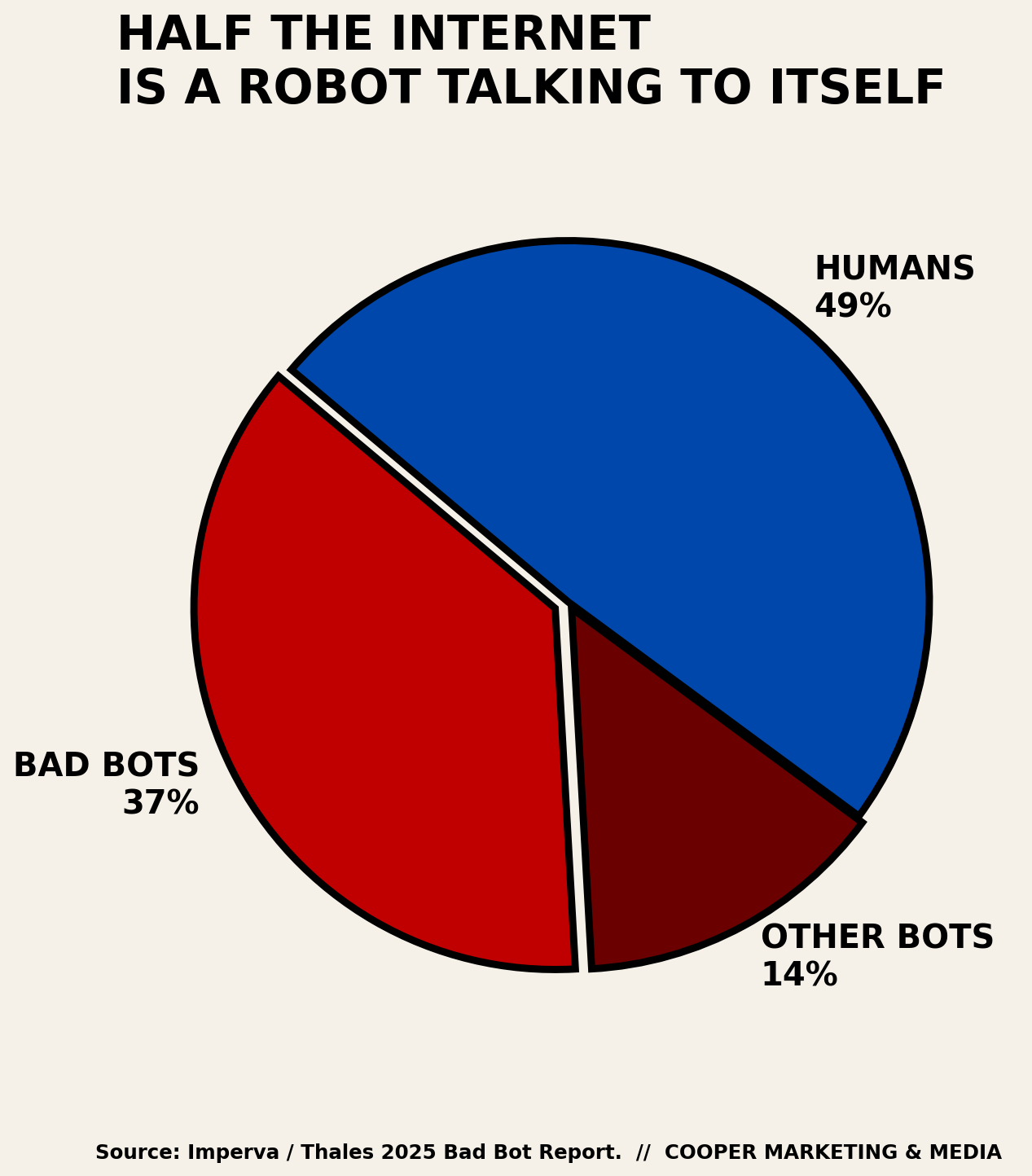

Here is the kicker from the Imperva 2025 Bad Bot Report. Automated traffic, meaning bots, now accounts for more than half of all internet traffic, and bad bots alone account for thirty-seven percent. The robots are winning. Half of what you think is a conversation is a machine talking to another machine while you pay for the electricity.

WHO IS WATCHING THE WATCHMEN? MOSTLY, NOBODY.

This is the part that makes me want to start drinking earlier. The honest answer to the question “who regulates social media?” is “nobody, really, not in the way you think.” The Federal Communications Commission does not regulate social media platforms. The Federal Trade Commission can chase platforms for deception or data breaches. The Department of Justice can prosecute actual crimes. Section 230 of the Communications Decency Act still shields platforms from most liability for user-generated content, though in April 2025, the DOJ Antitrust Division hosted a forum where officials from the DOJ, FTC, and FCC openly floated the idea of rewriting the whole thing. Nothing has actually changed yet. The fence is still down.

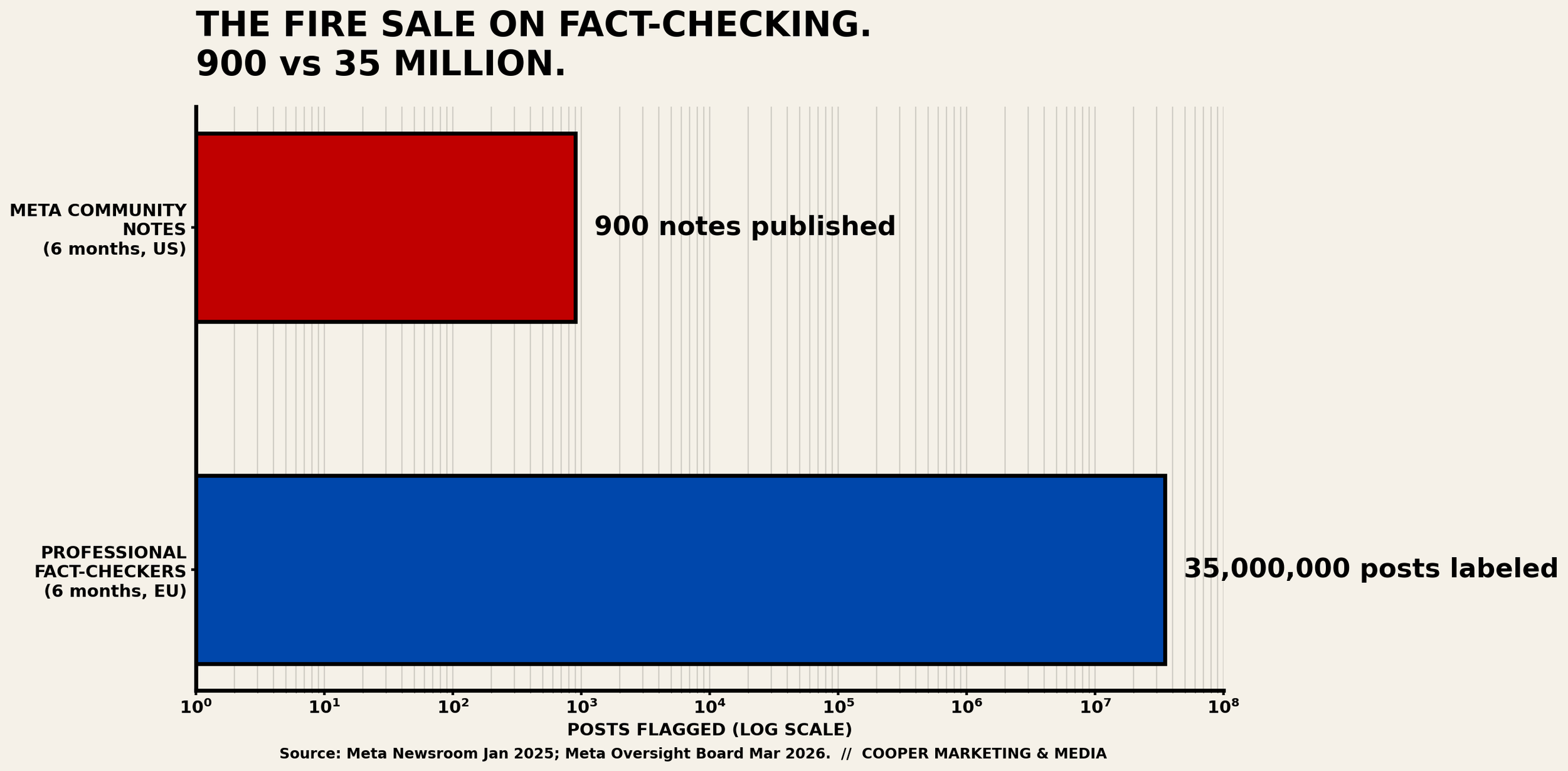

Fact-checking, meanwhile, just got a field demotion. On January 7, 2025, Meta announced it was killing its third-party fact-checking program, which had worked with more than ninety International Fact-Checking Network partners reviewing content in sixty languages since 2016, and replacing it with a crowd-sourced Community Notes system modeled on the one at X. Meta’s own Oversight Board has since warned that Community Notes is not an adequate substitute, particularly in elections, crises, and repressive regimes. Early numbers are brutal. In the first six months of the U.S. rollout, Meta’s platforms published roughly nine hundred Community Notes. Over a comparable six-month stretch under the old system, professional fact-checkers had labeled around thirty-five million posts across the European Union. Nine hundred versus thirty-five million. That is not a pivot. That is a fire sale.

So who polices fraud, abuse, and influence operations? The platforms themselves, when they feel like it, subject to whatever mood the executive suite happens to be in that quarter. The algorithm, which no outside auditor fully sees, decides what you see and how often you see it. The only real check on the machine right now is the machine.

POLITICS AS A CONTACT SPORT

The 2024 election cycle gave us the field test nobody asked for. NYU Stern’s Center for Business and Human Rights concluded that the primary tech-related threat to the election was not AI-generated deepfakes but the ordinary, large-scale spread of false, hateful, and violent content across social platforms. A University of Massachusetts Amherst study tracked how Facebook’s algorithm was successfully tuned down in 2020 to mute political and harmful posts, and how later changes reversed many of those gains. A 2025 ACM Fairness, Accountability, and Transparency paper audited X’s algorithm during the 2024 race and found measurable political exposure bias baked into amplification. A controlled ten-day experiment with over twelve hundred voters, published the same year, showed that simply varying exposure to anti-democratic and partisan-hostility content produced measurable shifts in polarization.

Translation, in plain English: the algorithms are not neutral plumbing. They push. They nudge. They decide whose outrage gets a megaphone and whose gets a whisper. And the people operating those levers are not required to tell you what they changed this morning.

That is the nefarious manipulation of the masses, and it does not require a secret smoke-filled room. It requires only an engagement metric and a quarterly earnings call.

WHO IS DRIVING THE BUSES?

A reasonable person will ask: Okay, who actually has a hand on the wheel? Short list. Elon Musk owns X, runs Tesla and SpaceX, is flirting with his own AI company called xAI, and as of 2025 sits at the top of the billionaire rankings with a net worth somewhere north of three hundred billion dollars, depending on what Tesla did this morning. He has more than two hundred million followers on the platform he owns. Mark Zuckerberg runs Meta, which owns Facebook, Instagram, WhatsApp, and Threads, with a personal net worth around two hundred billion. Sundar Pichai runs Alphabet, which owns Google, YouTube, and most of the ad-tech plumbing the entire internet runs on. Sam Altman runs OpenAI, valued at roughly five hundred billion dollars in late 2025, which is the engine behind ChatGPT and a big chunk of the synthetic content flooding your feed. Dario Amodei runs Anthropic, OpenAI’s most credible rival in the AI race. Evan Spiegel runs Snap. Shou Zi Chew runs TikTok, which is ultimately answerable to ByteDance in Beijing, which is answerable to the Chinese Communist Party, which is a sentence that should make everyone uncomfortable regardless of political stripe.

That is the room. Six to eight people, most of them American, one of them not, nearly all of them billionaires, steering the informational bloodstream of roughly five billion humans. That is not a conspiracy theory. That is the org chart.

How do we monitor their agendas and influence? Honestly, badly. We read their tweets. We read their congressional testimony when we can get them there. We read leaked internal memos when a journalist pries one loose. We track their political donations, which in 2025 included Zuckerberg, Pichai, Altman, Tim Cook, and Jeff Bezos each writing a roughly $1 million check to the Trump inauguration fund. That is public record. That is also a data point.

Are they purposely setting our course and speed? Some of them, clearly yes. Musk has used his platform to amplify specific political content, deplatform specific accounts, and personally lobby on legislation. He has also moved markets with a single post. Academic research from Princeton and others has documented that his tweets have produced measurable, sometimes double-digit, swings in Tesla's stock price and even larger swings in cryptocurrencies like Dogecoin. The SEC has previously fined him over tweets it deemed market-manipulating. One man. One post. Real money gone or made in minutes.

Can they move markets and cause panic? Yes, and we have the receipts. Can they sway elections? Probably at the margins, and margins decide elections. Do they do it without meaningful oversight? At the moment, mostly yes. That is the honest answer, and it should bother you whether you like these guys or hate them.

How do we know what is real and what is not? We triangulate. We cross-check against sources that get things wrong and then publish corrections, because that is actually the defining feature of real journalism. We learn to spot the tells of AI-generated images (hands, ears, background text, weird lighting). We assume any screenshot without a traceable link is theater until proven otherwise. We remember that the most emotionally satisfying version of a story is usually the least accurate one. None of this is easy. All of it is necessary.

THE MONEY, BECAUSE OF COURSE

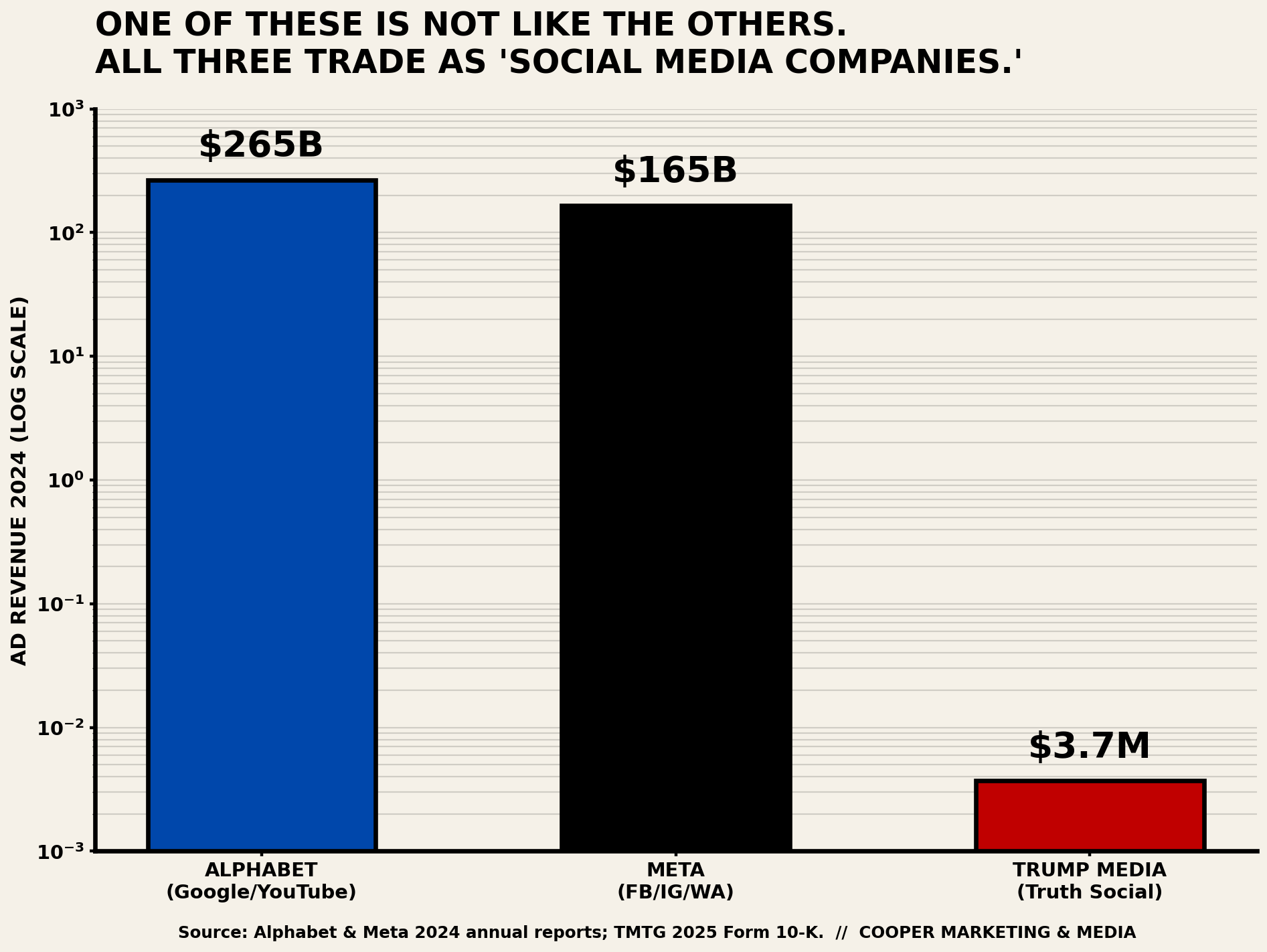

Let us follow it. In 2024, Alphabet (Google and YouTube) generated roughly $265 billion in advertising revenue. Meta (Facebook, Instagram, WhatsApp, Threads) pulled in approximately $165 billion in ad revenue in the same year. In Q3 2025 alone, Alphabet reported around $74 billion in ad revenue, and Meta reported around $50 billion, with Meta growing over 25% year over year. Global ad revenue across all players is on track to exceed $1 trillion annually, dominated by Google and Meta.

And the contribution back to society? The Chan Zuckerberg Initiative spent roughly $500 million on philanthropy in 2024 and holds around $9 billion in combined assets, with a focus mostly on biomedicine, education, and, increasingly, AI-accelerated science. That is genuinely good work. It is also a rounding error against Meta’s annual ad haul. If you made a hundred and sixty-five thousand dollars a year and gave away five hundred bucks, nobody would be building you a statue.
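If you want to check the napkin math on that comparison, a few lines will do it. This is only a sketch using the rounded figures quoted above; the variable names are mine, and the $165,000 household is the hypothetical from the analogy, not a real data point.

```python
# Rounded figures quoted in the text, in US dollars.
czi_giving_2024 = 500e6        # Chan Zuckerberg Initiative philanthropy
meta_ad_revenue_2024 = 165e9   # Meta advertising revenue

# Giving expressed as a share of the ad haul.
giving_share = czi_giving_2024 / meta_ad_revenue_2024

# Hypothetical household income used for the analogy.
household_income = 165_000
equivalent_gift = household_income * giving_share

print(f"Giving as a share of ad revenue: {giving_share:.2%}")
print(f"Equivalent gift on ${household_income:,} a year: ${equivalent_gift:,.0f}")
```

The share comes out to about three tenths of one percent, which is where the five hundred bucks comes from.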

So yes, they contribute. They also extract. The ratio matters.

AND THEN THERE IS TRUTH SOCIAL

Full disclosure before I say another word. I am not on Truth Social. I have never posted on Truth Social. I would not want an account on Truth Social. But in the name of honest reporting, I looked under the hood for this piece, and what I found was, well, wow. Oh, just wow. It’s a lot of noise. It is one-sided banter. It is the exact biased BULLSHIT this entire op-ed is about, just distilled into a single container and served warm. Fake memes, recycled outrage, and one very loud voice at the center of all of it. I will not pretend otherwise, because doing so would make me part of the problem.

Now, the part that nobody talks about enough. The financials. Let us treat Trump Media & Technology Group (ticker: DJT), the parent company of Truth Social, the same way we just treated Meta and Alphabet. Numbers on the table, no spin.

Monthly active users in 2025 hovered around 6 million, with wide month-to-month swings. For context, Facebook is around three billion and X is around four hundred and twenty million. Truth Social has roughly 0.2% of Facebook’s audience. That is not a typo. That is the scale.

Revenue, per the company’s own 2025 10-K filing with the SEC: three point seven million dollars. For the entire year. All of it. At their 2024 pace, Meta and Alphabet each generated more advertising revenue every twelve minutes than Truth Social did in 12 months. Net loss for 2025: seven hundred and twelve million dollars, though the company is careful to point out that most of that is unrealized paper losses tied to crypto-related securities it now holds on its balance sheet. Strip out the non-cash items, and the company managed a small positive operating cash flow last year, which is technically a milestone and a fairly low bar to clear.
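For anyone who wants to check the scale for themselves, here is a back-of-envelope sketch. It assumes the rounded 2024 ad revenue figures quoted earlier, spread evenly across every second of the year, and it compares them against the 2025 Truth Social revenue figure, so the years do not line up perfectly. The function name minutes_to_match is mine.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60

truth_social_revenue_2025 = 3.7e6  # USD, the 10-K figure quoted in the text
ad_revenue_2024 = {                # USD, rounded figures quoted earlier
    "Alphabet": 265e9,
    "Meta": 165e9,
}


def minutes_to_match(annual_revenue: float, target: float) -> float:
    """Minutes of average revenue needed to reach `target` dollars,
    assuming revenue arrives at a constant rate all year."""
    per_second = annual_revenue / SECONDS_PER_YEAR
    return target / per_second / 60


for company, revenue in ad_revenue_2024.items():
    minutes = minutes_to_match(revenue, truth_social_revenue_2025)
    print(f"{company}: about {minutes:.1f} minutes")
```

On these inputs, Alphabet needs a little over seven minutes of ad revenue to match Truth Social's year, and Meta needs a little under twelve.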

And yet. As of early 2026, Trump Media & Technology Group has a market capitalization between 2.5 and 3.5 billion dollars, depending on the week. Let us do the arithmetic. A company doing less than $4 million in revenue a year, losing hundreds of millions on paper, is valued in the billions. That is a price-to-revenue ratio of roughly 700 to 1 at the low end of that range and closer to 950 to 1 at the top. Most real software companies trade at ten to twenty times revenue. Something else is being priced in here, and it is not the advertising business.
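The arithmetic, spelled out as a quick sketch. It uses the revenue figure and the market-cap range quoted in this section, both of which are rounded, and the helper name price_to_revenue is just for illustration.

```python
revenue_2025 = 3.7e6                # USD, revenue quoted in the text
market_cap_range = (2.5e9, 3.5e9)   # USD, early-2026 range quoted in the text


def price_to_revenue(market_cap: float, annual_revenue: float) -> float:
    """Market capitalization divided by annual revenue."""
    return market_cap / annual_revenue


low, high = (price_to_revenue(cap, revenue_2025) for cap in market_cap_range)
print(f"Price-to-revenue: roughly {low:.0f}x to {high:.0f}x")
```

Even the bottom of that range sits well over thirty times the ten-to-twenty multiple mentioned above.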

What is being priced in, in plain English, is proximity to political power. The company is majority-owned by the Donald J. Trump Revocable Trust. The platform is the President's direct megaphone. That is not a subtle conflict of interest. That is the whole structure. A publicly traded company whose main asset is a social network that functions, in practice, as the personal broadcasting channel of the Commander in Chief, while retail investors buy and sell the stock based on how his week is going. If you proposed that setup in a screenwriting class, the professor would tell you it was too on-the-nose.

Is Truth Social doing anything the other platforms are not? Not really, except louder and from a smaller base. Same algorithm incentives. Same engagement-by-outrage business model. Same opaque moderation. Same thin financial fundamentals dressed up as a movement. The difference is that when Facebook boosts rage content, it is because the quarterly earnings call demanded it. When Truth Social boosts rage content, it is because rage content is the point, and the loudest voice on the platform is also the person with the nuclear codes. That distinction matters, and it does not flatter anyone.

None of this is a partisan observation. The numbers are in the SEC filing. The user base is in the analytics. The ownership structure is in the proxy statement. The content, if you care to look, is on the platform itself for anyone with a free afternoon and a strong stomach. I looked. I am reporting. You can decide.

THE ALMIGHTY USER AGREEMENT YOU AUTO-SIGNED AT 2 A.M.

Now for the part nobody reads, which is, of course, the part that matters most. When you tapped “I agree” at two in the morning on your twelfth redesign of the Instagram terms of service, you signed a contract. That contract is legally binding, and courts have upheld clickwrap agreements even when the user never actually read them. That is not an opinion. That is settled law.

Here is what you actually agreed to, in plain English. You still technically own your content. Congratulations. What you granted the platform, in exchange for the free app, is a non-exclusive, royalty-free, transferable, sub-licensable, worldwide license to use that content. Read those adjectives again slowly. Non-exclusive (you can license it elsewhere too, which is the one adjective in your favor). Royalty-free (you get nothing). Transferable and sub-licensable (they can hand it to anyone they want). Worldwide (everywhere). That license, under Meta’s current terms, includes the right to use your photos, videos, and text to train their AI systems, run their advertising models, and repurpose your content in promotional material. You agreed to all of it. Around minute seventeen of waiting for the app to update.

Can these sites catalog all the data you freely share: photos, locations, DMs, reactions, the time of day you tend to open the app, and the exact second you scroll past versus pause? Yes. All of it. They already do. Your DMs are not private in the way a sealed letter is private. They live on company servers, protected by server-side encryption (sometimes) and open to the company’s own read access (usually). End-to-end encrypted messages like Signal or standard WhatsApp chats are a different category; ordinary Instagram DMs are not in that category by default, and Facebook Messenger only began moving personal chats to default end-to-end encryption in late 2023.

What is actually protected? In the United States, HIPAA covers your medical records in a doctor’s office, not your Facebook posts about your health. FERPA covers your school records, not your TikTok rant about your teacher. COPPA restricts data collection on kids under thirteen, which the platforms love to quietly ignore until the FTC fines them. There is no comprehensive federal privacy law in this country. California, Colorado, Virginia, and a few others have their own state-level versions. Europe has GDPR, which is stricter, and that is why you keep seeing cookie pop-ups. That is roughly the entire fence. There are a lot of gates in it.

Can you post a disclaimer that says “This is mine, you can’t use it,” and make it stick? No. That viral copy-and-paste notice your aunt posts every other year is legally worthless. You already granted the license when you signed up. A public post that contradicts the terms of service you accepted does not override them. Sorry, Aunt Linda.

Is Alexa listening? Is Siri listening? Is your phone listening? The honest technical answer is more interesting than either “yes” or “no.” Research out of Northeastern University found that smart speakers can be falsely activated by more than a thousand common words that sound vaguely like wake words, and that audio snippets from those false activations get uploaded to cloud servers where human contractors have reviewed them. A separate Northeastern persona study showed that interactions with Alexa produced targeted ads for those personas elsewhere on the web, and Amazon has since confirmed it serves interest-based ads based on Alexa requests. Apple tightened Siri’s policies after a 2019 contractor-review scandal and now does more processing on-device. Google has done a similar cleanup. But the general answer is: these devices are listening for their wake word continuously, they sometimes think they heard it when they did not, and some of what they then capture becomes training and ad data. That is not a tinfoil-hat theory. That is the published literature.

How did that Nike ad pop up right after you mentioned running shoes out loud? Probably not because your phone recorded your voice. More likely because you, your spouse, or your friend group searched Nike earlier this week, because Facebook and Google stitch your ad profile to everyone in your household through shared IP addresses, cookies, and cross-device graphs, and because the platforms know you are a forty-ish guy in Tahoe who just Googled a ski pass. The targeting is that good without the microphone. That is arguably scarier than if they were just bugging your living room.

How do you know if a profile is real? Check the join date. Check the post history. Reverse image search the profile photo. Count the followers versus the number of posts (a brand new account with ten thousand followers and three posts is almost always a bot or a scam). Notice grammar that is too perfect, too weird, or both. If it feels like it is trying too hard to make you angry, it probably is, because outrage content is what the bot farms are built to produce.
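If it helps to see that checklist as logic, here is a minimal sketch. Everything in it is an assumption made for illustration: the Profile fields and the red_flags function are invented names, the thirty-day cutoff is an arbitrary stand-in for “brand new,” and the follower and post thresholds simply mirror the example above. It flags things worth a second look. It does not prove anything.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Profile:
    joined: date
    followers: int
    posts: int
    photo_found_elsewhere: bool  # what your own reverse image search turned up


def red_flags(profile: Profile, today: date) -> list[str]:
    """Return the checklist items this profile trips, if any."""
    flags = []
    account_age_days = (today - profile.joined).days
    if account_age_days < 30:  # illustrative cutoff for a brand new account
        flags.append("account is less than 30 days old")
    if profile.followers >= 10_000 and profile.posts <= 3:
        flags.append("many followers, almost no posts")
    if profile.photo_found_elsewhere:
        flags.append("profile photo appears elsewhere online")
    return flags


suspect = Profile(joined=date(2026, 1, 10), followers=10_000, posts=3,
                  photo_found_elsewhere=False)
print(red_flags(suspect, today=date(2026, 1, 20)))
```

The grammar and outrage tells are left out on purpose. Those still need a human reading the posts.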

How do you protect your own? Lock down your privacy settings once a year (they keep resetting). Use two-factor authentication everywhere. Stop posting real-time location to public feeds. Use a password manager. Assume anything you put on a platform could eventually become public, searchable, and permanent, and post accordingly. You are not being paranoid. You are being an adult.

“CAN I JUST POST: CHANGE MY ALGORITHM SO I SEE MY FRIENDS?”

Short answer: no. Slightly longer answer: also no, and the post you are thinking of is one of the oldest Facebook hoaxes still in circulation. You have seen it. Your aunt has posted it. Your cousin has posted it. They are stoked. They copy-pasted it off a friend's page because it sounded official, and now they are convinced Facebook will finally start showing them their actual friends again. Bless them.

Here is the actual post, more or less. “Here is how to bypass the new Facebook algorithm. Facebook only shows you posts from twenty-five or twenty-six friends. Copy and paste this (do not share) and leave a comment so we can see each other’s posts again.” Sometimes the number is fifteen. Sometimes it is a mysterious reference code like “OO5251839.” Sometimes it name-drops Mark Zuckerberg. The numbers change. The pitch does not.

Every single piece of that is false. Snopes has debunked it repeatedly. Facebook itself has publicly stated, in an official newsroom post titled “Inside Feed: Facebook 26 Friends Algorithm Myth,” that there is no twenty-six-friend limit, no cap on how many people can appear in your feed, and no magic copy-paste incantation that changes the ranking of your content. The post has been circulating since 2017. It does not work. It has never worked. It will not work tomorrow.

So why does it feel like it works? Because of a real but unrelated mechanism, and this part is interesting. When someone posts that hoax and asks people to leave a comment, a handful of their actual friends obediently type “hi” or “yes” underneath. Facebook’s ranking system absolutely notices that engagement, and from that moment on it is slightly more likely to show the poster and each commenter one another’s content. But the magic word was not in the pasted text. The magic word was the comment itself. You could have commented on a photo of their dog and gotten the exact same result. The hoax is stealing credit from a completely ordinary feature called “engagement signals,” which is how every social feed on earth has worked for a decade.

Here is what actually does change what you see. Like, comment, and share posts from the people you want to see more of. Hide posts from the people you do not. On Facebook, use the “Favorites” feature (Feeds, then Favorites) to build a list of up to thirty accounts whose posts you will see in chronological order. On Instagram, tap the app’s own “Following” view to get a chronological feed of just the people you follow. On X, pin the “Following” tab instead of “For You.” None of this requires a copy-paste spell. All of it is buried three menus deep on purpose, because the default algorithm is better for the platform’s ad business than a chronological feed from your actual friends. That is not a conspiracy. That is the user interface.

The deeper point is this. The copy-paste hoax is a small, sad little monument to how desperate people are to get their real friends back on these platforms and how convinced they are that some secret handshake exists. There is no handshake. There is only the algorithm, and the algorithm is not your friend. It is a slot machine designed to keep you pulling the lever. The good news is the settings to override it mostly do exist. The bad news is almost nobody knows where they are, because the platforms would rather you did not. Now you know. Go fix yours.

CAN WE TURN IT OFF?

Here is where the rational lens gets uncomfortable. Probably not, and probably not all the way, and probably not soon. Too much of modern life is welded to these platforms. Your doctor posts updates on Facebook. Your kid’s soccer team lives in a group chat. Your business gets found on Instagram and booked through a DM. Ripping it out is not like canceling cable. It is like canceling the phone book in 1995, if the phone book also knew who you voted for and what time you cried last Tuesday.

But the Pinker move here is the useful one. Rationality does not mean rejecting the tool. It means measuring it honestly and using it on purpose. The Dalai Lama adds the other half: compassion, starting with yourself. Meaning you do not need to rage-quit Instagram at midnight. You need to notice what the feed is doing to your nervous system and decide, in daylight, whether you consent.

Practical moves that actually work, based on the research and on running a small business in the real world:

Turn off notifications. All of them. The phone is a tool, not a boss.

Pick a window. Thirty minutes, twice a day. Outside that window, the app is closed.

Follow the boring experts, not the loud ones. Rage is engagement bait. Calm is not.

Before you share anything that makes you angry, wait one hour and check a second source. If it is still true in an hour, it will still be true tomorrow.

Talk to actual humans, in person, more than you scroll. This is not a metaphor. It is a prescription.

Teach the kids. The Surgeon General is not being dramatic. Three hours a day, double the anxiety, full stop.

MY CLOSING ARGUMENT

Are we pawns in the metaverse? Not entirely. Not yet. We are closer than we should be, and the gap is narrowing while regulators argue over who should be holding the leash. The platforms are not evil cartoon villains. They are enormous, amoral optimization engines pointed at our attention because attention is what they sell, and they are extremely good at their job. Ninety percent of what floats past you in the feed, by my unscientific but depressingly accurate field test, is bullshit. Premium grade. Carlin was right in 1990, and he is right in 2026.

The rational response is not panic. It is calibration. Use the tools on purpose. Verify before you share. Demand real regulation from the agencies that should have been on this a decade ago. Fund actual journalism with actual dollars. Look up from the screen often enough that a stranger at the coffee shop can still catch your eye and nod hello.

That last part is the Dalai Lama’s quiet point, the one that sneaks up on you if you let it. The feed wants you agitated because agitation is profitable. The antidote is not a better app. The antidote is a walk, a phone call to your mom, a conversation with someone who does not agree with you but is willing to listen. The algorithm cannot monetize that. Which is exactly why it is worth doing.

Now put the phone down for ten minutes. I will wait.

SOURCES & REFERENCES

Pew Research Center, “Americans’ Social Media Use 2025,” November 20, 2025.

U.S. Surgeon General, “Social Media and Youth Mental Health Advisory,” 2023.

DataReportal / GWI, “Digital 2025: The State of Social Media,” February 2025.

Imperva / Thales, “2025 Bad Bot Report,” April 2025.

Meta Newsroom, “More Speech and Fewer Mistakes,” January 7, 2025.

Meta Oversight Board advisory opinion on Community Notes, March 2026.

NYU Stern Center for Business and Human Rights, “Digital Risks to the 2024 Elections.”

ACM FAccT 2025, “Auditing Political Exposure Bias: Algorithmic Amplification on X.”

Alphabet and Meta 2024 annual reports; Q3 2025 earnings releases.

Chan Zuckerberg Initiative 2024 annual disclosures.

U.S. DOJ Antitrust Division forum on Section 230, April 3, 2025.

Princeton DataSpace, “How Influential is Elon Musk? An Event Study Analysis of Tweets on Auto Stock Returns.”

Bloomberg Billionaires Index, 2025 rankings.

The Intercept, “Nearly $1 Trillion: The Staggering Combined Net Worth Cheering at Trump’s Inauguration,” January 20, 2025.

Meta Terms of Service and Instagram Terms of Use, 2025 revisions.

Northeastern University, “Are Alexa, Siri, and Cortana recording your private conversations?” 2020, and “How Alexa, Siri, and Google Assistant Profile You,” March 2025.

AI Content Shield browser extension; Kagi SlopStop; DuckDuckGo noai.duckduckgo.com; uBlock Origin HUGE AI Blocklist.

Trump Media & Technology Group Corp. (DJT), 2025 Annual Report (Form 10-K) filed with the SEC.

TMTG Full-Year 2025 Results announcement, investor relations release.

Statista and DemandSage, Truth Social monthly active user estimates, 2024 and 2025.

Wikipedia and TMTG proxy filings, ownership structure (Donald J. Trump Revocable Trust).

Facebook Newsroom, “Inside Feed: Facebook 26 Friends Algorithm Myth,” February 2019.

Snopes, “Here’s How To Bypass the System Post Is a Facebook Hoax” and related fact-check articles, 2017 to 2024.